One piece of general advice that I offer to fellow scientists is to not let the fact that an article has been published in Nature (or any other ‘elite’ journal for that matter) cause you to switch off your critical thinking skills while reading it. The BW2014 article (Chemistry: Chemical con artists foil drug discovery) that I’ll be reviewing in this post is an excellent case in point. My main criticism of BW2014 is that the rhetoric is not supported by data and I’ve always seen the article as something of a propaganda piece.

One observation that I’ll make before starting my review of BW2014 is that what lawyers would call ‘standard of proof’ varies according to whether you’re saying something good about a compound or something bad. For example, I would expect a competent peer reviewer to insist on measured IC50 values if I had described compounds as inhibitors of an enzyme in a manuscript. However, it appears to be acceptable, even in top journals, to describe compounds as PAINS without having to provide any experimental evidence that they actually exhibit some type of nuisance behavior (let alone pan-assay interference). I see a tendency in the ‘compound quality’ field for opinions to be stated as facts and reading some of the relevant literature leaves me with the impression that some in the field have lost the ability to distinguish what they know from what they believe.

BW2014 has been heavily cited in the drug discovery literature (it was cited as the first reference in the ACS assay interference editorial which I reviewed in K2017) despite providing little in the way of practical advice for dealing with nuisance behavior. BW2014 appears to exert a particularly strong influence on the Chemical Probes Community, having been cited by the A2015, BW2017, AW2022 and A2022 articles as well as in the Toxicophores and PAINS Alerts section of the Chemical Probes Portal. Given the commitment of the Chemical Probes Community to open science, their enthusiasm for the PAINS substructure model introduced in BH2010 (New Substructure Filters for Removal of Pan Assay Interference Compounds (PAINS) from Screening Libraries and for Their Exclusion in Bioassays) is somewhat perplexing since neither the assay data nor the associated chemical structures were disclosed. My advice to the Chemical Probes Community is to let go of PAINS filters.

Before discussing BW2014, I’ll say a bit about high-throughput screening (HTS) which emerged three decades ago as a lead discovery paradigm. From the early days of HTS it was clear, at least to those who were analyzing the output from the screens, that not every hit smelt of roses. Here’s what I wrote in K2017:

Although poor physicochemical properties were partially blamed (3) for the unattractive nature and promiscuous behavior of many HTS hits, it was also recognized that some of the problems were likely to be due to the presence of particular substructures in the molecular structures of offending compounds. In particular, medicinal chemists working up HTS results became wary of compounds whose molecular structures suggested reactivity, instability, accessible redox chemistry or strong absorption in the visible spectrum as well as solutions that were brightly colored. While it has always been relatively easy to opine that a molecular structure ‘looks ugly’, it is much more difficult to demonstrate that a compound is actually behaving badly in an assay.

It has long been recognized that it is prudent to treat frequent-hitters (compounds that hit in multiple assays) with caution when analyzing HTS output. In K2017 I discussed two general types of behavior that can cause compounds to hit in multiple assays: Type 1 (assay result gives an incorrect indication of the extent to which the compound affects target function) and Type 2 (compound acts on target by undesirable mechanism of action (MoA)). Type 1 behavior is typically the result of interference with the assay read-out and the hits in question can be accurately described as ‘false positives’ because the effects on the target are not real. Type 1 behavior should be regarded as a problem with the assay (rather than with the compound) and, provided that the activity of a compound has been established using a read-out for which interference is not a problem, interference with other read-outs is irrelevant. In contrast, Type 2 behavior should be regarded as a problem with the compound (rather than with the assay) and an undesirable MoA should always be a show-stopper.

Interference with read-out and undesirable MoAs can both cause compounds to hit in multiple assays. However, these two types of bad behavior can still cause big problems whether or not the compounds are observed to be frequent-hitters. Interference with read-out and undesirable MoAs are very different problems in drug discovery and the failure to recognize this point is a serious deficiency that is shared by BW2014 and BH2010.

Although I’ve criticized the use of PAINS filters there is no suggestion that compounds matching PAINS substructures are necessarily benign (many of the PAINS substructures look distinctly unwholesome to me). I have no problem whatsoever with people expressing opinions as to the suitability of compounds for screening provided that the opinions are not presented as facts. In my view the chemical con-artistry of PAINS filters is not that benign compounds have been denounced but the implication that PAINS filters are based on relevant experimental data.

Given that the PAINS filters form the basis of a cheminformatic model that is touted for prediction of pan-assay interference, one could be forgiven for thinking that the model had been trained using experimental observations of pan-assay interference. This is not so, however, and the data that form the basis of the PAINS filter model actually consist of the output of six assays that each use the AlphaScreen read-out. As noted in K2017, a panel of six assays using the same read-out would appear to be a suboptimal design of an experiment to observe pan-assay interference. Putting this in perspective, P2006 (An Empirical Process for the Design of High-Throughput Screening Deck Filters), which was based on analysis of the output from 362 assays, had actually been published four years before BH2010.
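The design point can be made concrete with a minimal sketch in Python. The compound identifiers, assay names and read-out labels below are entirely hypothetical (they are not data from BH2010 or P2006). For each compound, the sketch counts the assays in which it hit and the distinct read-outs used by those assays.

```python
from collections import defaultdict

# Hypothetical screening records: (compound_id, assay_id, readout, is_hit).
# None of these values come from BH2010, P2006 or any real screen.
RECORDS = [
    ("cpd_A", "assay_1", "AlphaScreen", True),
    ("cpd_A", "assay_2", "AlphaScreen", True),
    ("cpd_A", "assay_3", "AlphaScreen", True),
    ("cpd_B", "assay_1", "AlphaScreen", True),
    ("cpd_B", "assay_4", "fluorescence", True),
    ("cpd_B", "assay_5", "SPR", True),
    ("cpd_C", "assay_1", "AlphaScreen", False),
    ("cpd_C", "assay_4", "fluorescence", True),
]


def summarize_hits(records):
    """Return {compound_id: (n_assays_hit, n_distinct_readouts_hit)}."""
    assays = defaultdict(set)
    readouts = defaultdict(set)
    for compound_id, assay_id, readout, is_hit in records:
        if is_hit:
            assays[compound_id].add(assay_id)
            readouts[compound_id].add(readout)
    return {c: (len(assays[c]), len(readouts[c])) for c in assays}


summary = summarize_hits(RECORDS)
for compound_id, (n_assays, n_readouts) in sorted(summary.items()):
    print(compound_id, n_assays, n_readouts)
```

In this toy data set cpd_A and cpd_B each hit in three assays, but only the hits for cpd_B span more than one read-out. When every assay in a panel shares a read-out, the second count can never exceed one, so the panel cannot separate frequent-hitter behavior from interference with that single read-out.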

After a bit of a preamble, I need to get back to reviewing BW2014 and my view is that readers of the article who didn’t know better could easily conclude that drug discovery scientists were completely unaware of the problems associated with misleading HTS assay results before the re-branding of frequent-hitters as PAINS in BH2010. Given that M2003 had been published over a decade previously, I was rather surprised that BW2014 had not cited a single article about how colloidal aggregation can foil drug discovery. Furthermore, it had been known (see FS2006) for years before the publication of BH2010 that the importance of colloidal aggregation could be assessed by running assays in the presence of detergent.

I'll be commenting directly on the text of BW2014 for the remainder of the post (my comments are enclosed in square brackets).

Most PAINS function as reactive chemicals rather than discriminating drugs. [It is unclear here whether “PAINS” refers to compounds that have been shown by experiment to exhibit pan-assay interference or simply compounds that share structural features with compounds (chemical structures not disclosed) claimed to be frequent-hitters in the BH2010 assay panel. In any case, sweeping generalizations like this do need to be backed with evidence. I do not consider it valid to present observations of frequent-hitter behavior as evidence that compounds are functioning as reactive chemicals in assays.] They give false readouts in a variety of ways. Some are fluorescent or strongly coloured. In certain assays, they give a positive signal even when no protein is present. [The BW2014 authors appear to be confusing physical phenomena such as fluorescence with chemical reactivity.]

Some of the compounds that should ring the most warning bells are toxoflavin and polyhydroxylated natural phytochemicals such as curcumin, EGCG (epigallocatechin gallate), genistein and resveratrol. These, their analogues and similar natural products persist in being followed up as drug leads and used as ‘positive’ controls even though their promiscuous actions are well-documented (8,9). [Toxoflavin is not mentioned in either Ref8 or Ref9 although T2004 would have been a relevant reference for this compound. Ref8 only discusses curcumin and I do not consider that the article documents the promiscuous actions of this compound. Proper documentation of the promiscuity of a compound would require details of the targets that were hit, the targets that were not hit and the concentration(s) at which the compound was assayed. The effects of curcumin, EGCG (epigallocatechin gallate), genistein and resveratrol on four membrane proteins were reported in Ref9 and these effects would raise doubts about activity for any of these compounds (or their close structural analogs) that had been observed in a cell-based assay. However, I don’t consider that it would be valid to use the results given in Ref9 to cast doubt on biological activity measured in an assay that was not cell-based.]

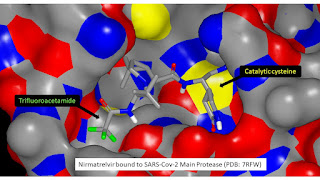

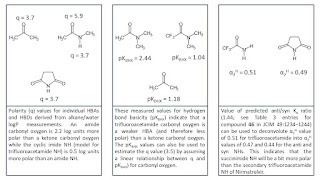

Rhodanines exemplify the extent of the problem. [Rhodanines are specifically discussed in K2017 in which I suggest that the most plausible explanation for the frequent-hitter behavior observed for rhodanines in the BH2010 panel of six AlphaScreen assays is that the singly-connected sulfur reacts with singlet oxygen (this reactivity has been reported for compounds with thiocarbonyl groups in their molecular structures).] A literature search reveals 2,132 rhodanines reported as having biological activity in 410 papers, from some 290 organizations of which only 24 are commercial companies. [Consider what the literature search would have revealed if the target substructure had been ‘benzene ring’ rather than ‘rhodanine’. As discussed in this post, the B2023 study presented the diversity of targets hit by compounds incorporating fused tetrahydroquinolines in their molecular structures as ‘evidence’ for pan-assay interference by compounds based on this scaffold.] The academic publications generally paint rhodanines as promising for therapeutic development. In a rare example of good practice, one of these publications (10) (by the drug company Bristol-Myers Squibb) warns researchers that these types of compound undergo light-induced reactions that irreversibly modify proteins. [The C2001 study (Photochemically enhanced binding of small molecules to the tumor necrosis factor receptor-1 inhibits the binding of TNF-α) is actually a more relevant reference since it focuses on the nature of the photochemically enhanced binding. The structure of the complex of TNFRc1 with one of the compounds studied (IV703; see graphic below) showed a covalent bond between one of the carbon atoms of the pendant nitrophenyl and the backbone amide nitrogen of A62. The structure of the IV703–TNFRc1 complex shows that a covalent bond between a pendant aromatic ring and the protein must also be considered as a distinct possibility for the rhodanines reported in Ref10 and C2001.]
It is hard to imagine how such a mechanism could be optimized to produce a drug or tool. Yet this paper is almost never cited by publications that assume that rhodanines are behaving in a drug-like manner. [It would be prudent to cite M2012 (Privileged Scaffolds or Promiscuous Binders: A Comparative Study on Rhodanines and Related Heterocycles in Medicinal Chemistry) if denouncing fellow drug discovery scientists for failure to cite Ref10.]

In a move partially implemented to help editors and manuscript reviewers to rid the literature of PAINS (among other things), the Journal of Medicinal Chemistry encourages the inclusion of computer-readable molecular structures in the supporting information of submitted manuscripts, easing the use of automated filters to identify compounds’ liabilities. [I would be extremely surprised if ridding the literature of PAINS was considered by the JMC Editors when they decided to implement a requirement that authors include computer-readable molecular structures in the supporting information of submitted manuscripts. In any case, claims such as this do need to be supported by evidence.] We encourage other journals to do the same. We also suggest that authors who have reported PAINS as potential tool compounds follow up their original reports with studies confirming the subversive action of these molecules. [I’ve always found this statement bizarre since the BW2014 authors appear to be suggesting that authors who have reported PAINS as potential tool compounds should confirm something that they have not observed and which may not even have occurred. When using the term “PAINS” do the BW2014 authors mean compounds that have actually been shown to exhibit pan-assay interference or compounds that share structural features with compounds that were claimed to exhibit frequent-hitter behavior in the BH2010 assay panel? Would interference with the AlphaScreen read-out by a singlet oxygen quencher be regarded as a subversive action by a molecule in situations when a read-out other than AlphaScreen had been used?] Labelling these compounds clearly should decrease futile attempts to optimize them and discourage chemical vendors from selling them to biologists as valid tools. [The real problem here is compounds being sold as tools in the absence of the measured data that is needed to support the use of the compounds for this purpose.
Matches with PAINS substructures would not rule out the use of a compound as a tool if the appropriate package of measured data is available. In contrast, a compound that does not match any PAINS substructures cannot be regarded as an acceptable tool if the appropriate package of measured data is not available. Put more bluntly, you’re hardly going to be able to generate the package of measured data if the compound is as bad as PAINS filter advocates say it is.]

Box: PAINS-proof drug discovery

Check the literature. [It’s always a good idea to check the literature but the failure of the BW2014 authors to cite a single colloidal aggregation article such as M2003 suggests that perhaps they should be following this advice rather than giving it. My view is that the literature on scavenging and quenching of singlet oxygen was treated in a cursory manner in BH2010 (see earlier comment in connection with rhodanines).] Search by both chemical similarity and substructure to see if a hit interacts with unrelated proteins or has been implicated in non-drug-like mechanisms. [Chemical similarity and substructure searches will identify analogs of hits, and it is actually the exact-match structural search that you need to do in order to see if a particular compound is a hit in assays against unrelated proteins.] Online services such as SciFinder, Reaxys, BadApple or PubChem can assist in the check for compounds (or classes of compound) that are notorious for interfering with assays. [I generally recommend ChEMBL as a source of bioactivity data.]

Assess assays. For each hit, conduct at least one assay that detects activity with a different readout. [This will only detect problems associated with interference with read-out. As discussed in S2009 it may be possible to assess and even correct for interference with read-out without having to run an assay with a different read-out.] Be wary of compounds that do not show activity in both assays. If possible, assess binding directly, with a technique such as surface plasmon resonance. [SPR can also provide information about MoA since association, dissociation and stoichiometry can all be observed directly using this detection technology.]
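The distinction between interference with read-out (Type 1) and an undesirable MoA (Type 2) that runs through these comments can be sketched as a simple triage. The Python below is only an illustration: the field names, the category labels and the order of the checks are assumptions made for this sketch and are not taken from BW2014 or K2017.

```python
from dataclasses import dataclass
from typing import Optional

# Category labels are hypothetical and exist only for this sketch.
INACTIVE = "inactive in primary assay"
UNCONFIRMED = "unconfirmed: no assay with a different read-out has been run"
TYPE_1_SUSPECT = "possible interference with read-out (Type 1)"
MOA_UNASSESSED = "activity confirmed with second read-out: MoA not yet assessed"
BINDING_SEEN = "activity and direct binding observed: undesirable MoA (Type 2) not excluded"
NO_BINDING = "activity without observed binding: investigate further"


@dataclass
class HitEvidence:
    """Hypothetical summary of what has actually been measured for one hit."""
    active_primary: bool               # active in the primary assay
    active_orthogonal: Optional[bool]  # active with a different read-out (None = not run)
    binding_observed: Optional[bool]   # direct binding seen, e.g. by SPR (None = not run)


def triage(hit: HitEvidence) -> str:
    """Assign a hit to a category using only measured data."""
    if not hit.active_primary:
        return INACTIVE
    if hit.active_orthogonal is None:
        return UNCONFIRMED
    if not hit.active_orthogonal:
        return TYPE_1_SUSPECT
    # A second read-out addresses Type 1 behavior only; it says nothing about MoA.
    if hit.binding_observed is None:
        return MOA_UNASSESSED
    return BINDING_SEEN if hit.binding_observed else NO_BINDING


print(triage(HitEvidence(True, False, None)))
print(triage(HitEvidence(True, True, True)))
```

Note that no branch consults a substructure match: every category is assigned from measurements, and even the most favorable outcome leaves the MoA question open.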

That concludes blogging for 2023 and many thanks to anybody who has read any of the posts this year. For too many people Planet Earth is not a very nice place to be right now and my new year wish is for a kinder, happier and more peaceful world in 2024.